
MyCaffe

1.12.2.41

Deep learning software for Windows C# programmers.

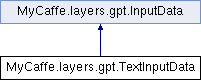

The TextInputData class manages character data read from a text file. The data is tokenized into indexes, each of which references a character within the vocabulary.

Public Member Functions

| Type | Member | Description |
|---|---|---|
| | `TextInputData(string strSrc, TokenizedDataParameter.VOCABULARY_TYPE vocabType = TokenizedDataParameter.VOCABULARY_TYPE.CHARACTER, int? nRandomSeed = null, string strDebugIndexFile = null, Phase phase = Phase.NONE)` | The constructor. |
| `override bool` | `GetDataAvailabilityAt(int nIdx, bool bIncludeSrc, bool bIncludeTrg)` | Returns true if data is available at the given index. |
| `override Tuple<float[], float[]>` | `GetData(int nBatchSize, int nBlockSize, InputData trgData, out int[] rgnIdx)` | Retrieve random blocks from the source data along with their targets, where each target block holds the same data as its input block offset by +1 element. |
| `override Tuple<float[], float[]>` | `GetDataAt(int nBatchSize, int nBlockSize, int[] rgnIdx)` | Not used by this class. |
| `override List<int>` | `Tokenize(string str, bool bAddBos, bool bAddEos)` | Tokenize an input string using the internal vocabulary. |
| `override string` | `Detokenize(int nTokIdx, bool bIgnoreBos, bool bIgnoreEos)` | Detokenize a single token. |
| `override string` | `Detokenize(float[] rgfTokIdx, int nStartIdx, int nCount, bool bIgnoreBos, bool bIgnoreEos)` | Detokenize an array of tokens into a string. |

Public Member Functions inherited from MyCaffe.layers.gpt.InputData

| Type | Member | Description |
|---|---|---|
| | `InputData(int? nRandomSeed = null)` | The constructor. |

Properties

| Type | Property | Description |
|---|---|---|
| `override List<string>` | `RawData` [get] | Return the raw data. |
| `override uint` | `TokenSize` [get] | The text data token size is a single character. |
| `override uint` | `VocabularySize` [get] | Returns the number of unique characters in the data. |
| `override char` | `BOS` [get] | Return the special begin of sequence character. |
| `override char` | `EOS` [get] | Return the special end of sequence character. |

Properties inherited from MyCaffe.layers.gpt.InputData

| Type | Property | Description |
|---|---|---|
| `abstract List<string>` | `RawData` [get] | Returns the raw data. |
| `abstract uint` | `TokenSize` [get] | Returns the size of a single token (e.g., 1 for character data). |
| `abstract uint` | `VocabularySize` [get] | Returns the size of the vocabulary. |
| `abstract char` | `BOS` [get] | Return the special begin of sequence character. |
| `abstract char` | `EOS` [get] | Return the special end of sequence character. |

Additional Inherited Members

Protected Attributes inherited from MyCaffe.layers.gpt.InputData

| Type | Member | Description |
|---|---|---|
| `Random` | `m_random` | Specifies the random object made available to the derived classes. |

The TextInputData class manages character data read from a text file. The data is tokenized into indexes, each of which references a character within the vocabulary.

For example, if the data source contains the text "a red fox ran.", the vocabulary would be:

| Character | ' ' | '.' | 'a' | 'd' | 'e' | 'f' | 'n' | 'o' | 'r' | 'x' |
|---|---|---|---|---|---|---|---|---|---|---|
| Index | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |

Tokenizing converts each input character into its respective token, which in this case is its index value. For example, 'a' is tokenized as index 2, 'd' as index 3, and so on.

Definition at line 500 of file TokenizedDataLayer.cs.
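The vocabulary and tokenization described above can be illustrated with a small, self-contained C# program. This is a minimal sketch, not MyCaffe's implementation. It assumes that the vocabulary is the set of distinct characters ordered by their `char` value, which reproduces the index table for "a red fox ran.". The local functions `BuildVocabulary`, `Tokenize` and `Detokenize` are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper (assumption): the vocabulary is the distinct characters
// of the text, ordered by their char (UTF-16 code unit) values.
static List<char> BuildVocabulary(string text) =>
    text.Distinct().OrderBy(c => c).ToList();

// Replace each character with its index in the vocabulary.
static List<int> Tokenize(string text, List<char> vocab)
{
    var indexOf = new Dictionary<char, int>();
    for (int i = 0; i < vocab.Count; i++)
        indexOf[vocab[i]] = i;
    return text.Select(c => indexOf[c]).ToList();
}

// Replace each index with its vocabulary character.
static string Detokenize(IEnumerable<int> ids, List<char> vocab) =>
    new string(ids.Select(t => vocab[t]).ToArray());

string source = "a red fox ran.";
List<char> vocabulary = BuildVocabulary(source);
List<int> tokens = Tokenize(source, vocabulary);

Console.WriteLine(string.Join(", ", vocabulary.Select(c => $"'{c}'")));
Console.WriteLine(string.Join(", ", tokens));
Console.WriteLine(Detokenize(tokens, vocabulary));
```

With this ordering the vocabulary has ten entries. Detokenizing the token list gives back the original text.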

`MyCaffe.layers.gpt.TextInputData.TextInputData(string strSrc, TokenizedDataParameter.VOCABULARY_TYPE vocabType = TokenizedDataParameter.VOCABULARY_TYPE.CHARACTER, int? nRandomSeed = null, string strDebugIndexFile = null, Phase phase = Phase.NONE)`

The constructor.

Parameters

| Parameter | Description |
|---|---|
| strSrc | Specifies the data source as the filename of the text data file. |
| vocabType | Specifies the vocabulary type to use. |
| nRandomSeed | Optionally, specifies a random seed for testing. |
| strDebugIndexFile | Optionally, specifies the debug index file containing index values in the form 'idx = #', one per line. |
| phase | Specifies the currently running phase. |

Definition at line 520 of file TokenizedDataLayer.cs.
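The `strDebugIndexFile` format is described only as one 'idx = #' entry per line. The hypothetical helper `ParseDebugIndexes` below is a sketch of one way to read such lines. It is not MyCaffe's code. Its rules are assumptions: blank lines are skipped, whitespace around '=' is tolerated, and any other line is rejected.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;

// Hypothetical parser (assumption) for debug index lines such as "idx = 42".
static List<int> ParseDebugIndexes(IEnumerable<string> lines)
{
    var indexes = new List<int>();
    foreach (string raw in lines)
    {
        string line = raw.Trim();
        if (line.Length == 0)
            continue; // assumption: blank lines are ignored

        string[] parts = line.Split('=');
        if (parts.Length != 2 || parts[0].Trim() != "idx")
            throw new FormatException($"Expected 'idx = #' but found '{raw}'.");

        indexes.Add(int.Parse(parts[1].Trim(), CultureInfo.InvariantCulture));
    }
    return indexes;
}

// In practice the lines could come from System.IO.File.ReadAllLines(path).
string[] sample = { "idx = 10", "idx = 257", "", "idx=3" };
List<int> debugIndexes = ParseDebugIndexes(sample);
Console.WriteLine(string.Join(", ", debugIndexes));
```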

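`override string MyCaffe.layers.gpt.TextInputData.Detokenize(float[] rgfTokIdx, int nStartIdx, int nCount, bool bIgnoreBos, bool bIgnoreEos)` [virtual]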

Detokenize an array into a string.

Parameters

| Parameter | Description |
|---|---|
| rgfTokIdx | Specifies the array of tokens to detokenize. |
| nStartIdx | Specifies the starting index where detokenizing begins. |
| nCount | Specifies the number of tokens to detokenize. |
| bIgnoreBos | Specifies to ignore the BOS token. |
| bIgnoreEos | Specifies to ignore the EOS token. |

Implements MyCaffe.layers.gpt.InputData.

Definition at line 694 of file TokenizedDataLayer.cs.
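The parameters of this overload describe a loop over a slice of the token array. The sketch below shows one plausible reading of those parameters, not MyCaffe's code. It assumes that the float values are whole-number vocabulary indexes. It also assumes that the BOS and EOS characters sit at known positions in the vocabulary. The control characters chosen for them, the vocabulary contents and the name `DetokenizeRange` are all hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

// Hypothetical vocabulary (assumption): index 0 is a BOS marker, index 1 an EOS marker.
const char Bos = '\u0002';
const char Eos = '\u0003';
var vocab = new List<char> { Bos, Eos, ' ', 'a', 'd', 'e', 'r' };

// Detokenize nCount tokens starting at nStartIdx, optionally dropping BOS/EOS.
string DetokenizeRange(float[] rgfTokIdx, int nStartIdx, int nCount, bool bIgnoreBos, bool bIgnoreEos)
{
    if (nStartIdx < 0 || nCount < 0 || nStartIdx + nCount > rgfTokIdx.Length)
        throw new ArgumentOutOfRangeException(nameof(nCount));

    var sb = new StringBuilder();
    for (int i = nStartIdx; i < nStartIdx + nCount; i++)
    {
        char ch = vocab[(int)rgfTokIdx[i]]; // the API passes token indexes as floats
        if (bIgnoreBos && ch == Bos) continue;
        if (bIgnoreEos && ch == Eos) continue;
        sb.Append(ch);
    }
    return sb.ToString();
}

float[] tokens = { 0, 3, 2, 6, 5, 4, 1 }; // BOS, "a red", EOS
Console.WriteLine(DetokenizeRange(tokens, 0, tokens.Length, true, true));
```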

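`override string MyCaffe.layers.gpt.TextInputData.Detokenize(int nTokIdx, bool bIgnoreBos, bool bIgnoreEos)` [virtual]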

Detokenize a single token.

Parameters

| Parameter | Description |
|---|---|
| nTokIdx | Specifies an index to the token to be detokenized. |
| bIgnoreBos | Specifies to ignore the BOS token. |
| bIgnoreEos | Specifies to ignore the EOS token. |

Implements MyCaffe.layers.gpt.InputData.

Definition at line 680 of file TokenizedDataLayer.cs.

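`override Tuple<float[], float[]> MyCaffe.layers.gpt.TextInputData.GetData(int nBatchSize, int nBlockSize, InputData trgData, out int[] rgnIdx)` [virtual]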

Retrieve random blocks from the source data along with their targets. Each target block holds the same data as its input block, offset by +1 element, so every target element is the element that follows the corresponding input element.

Parameters

| Parameter | Description |
|---|---|
| nBatchSize | Specifies the batch size. |
| nBlockSize | Specifies the block size. |
| trgData | Specifies the target data, used to check for data availability at the selected data index. |
| rgnIdx | Returns (as an out parameter) the array of indexes of the data returned. |

Implements MyCaffe.layers.gpt.InputData.

Definition at line 599 of file TokenizedDataLayer.cs.
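The offset-by-one relationship between data and target is the usual next-token training setup for GPT-style models. The code below is a minimal sketch of that sampling and is not MyCaffe's implementation. It assumes the source text is already tokenized into an `int` array. It also assumes that each block start is drawn uniformly, so that both the input block and its shifted target fit inside the data. The helper name `SampleBlocks` is hypothetical, and the `trgData` availability check is omitted.

```csharp
using System;

// Hypothetical sketch (assumption) of GetData-style sampling: for each of nBatchSize
// blocks, pick a random start, copy nBlockSize tokens as the input and the same
// span shifted by +1 as the target.
static (float[] data, float[] target) SampleBlocks(int[] tokens, int nBatchSize, int nBlockSize, Random random, out int[] rgnIdx)
{
    if (tokens.Length < nBlockSize + 1)
        throw new ArgumentException("The data must hold at least nBlockSize + 1 tokens.");

    var data = new float[nBatchSize * nBlockSize];
    var target = new float[nBatchSize * nBlockSize];
    rgnIdx = new int[nBatchSize];

    for (int b = 0; b < nBatchSize; b++)
    {
        // Exclusive upper bound keeps start + nBlockSize (the last target index) in range.
        int start = random.Next(0, tokens.Length - nBlockSize);
        rgnIdx[b] = start;

        for (int t = 0; t < nBlockSize; t++)
        {
            data[b * nBlockSize + t] = tokens[start + t];
            target[b * nBlockSize + t] = tokens[start + t + 1]; // target is offset +1
        }
    }
    return (data, target);
}

// Tokens for "a red fox ran." under a sorted character vocabulary.
int[] sourceTokens = { 2, 0, 8, 4, 3, 0, 5, 7, 9, 0, 8, 2, 6, 1 };
var (batchData, batchTarget) = SampleBlocks(sourceTokens, 2, 4, new Random(1701), out int[] idx);
Console.WriteLine("block starts: " + string.Join(", ", idx));
```

Because every start is drawn independently, blocks in a batch may overlap. The fixed seed only makes the sketch repeatable.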

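`override Tuple<float[], float[]> MyCaffe.layers.gpt.TextInputData.GetDataAt(int nBatchSize, int nBlockSize, int[] rgnIdx)` [virtual]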

The GetDataAt method is not used by this class.

Parameters

| Parameter | Description |
|---|---|
| nBatchSize | Specifies the number of blocks in the batch. |
| nBlockSize | Specifies the size of each block. |
| rgnIdx | Not used. |

Exceptions: NotImplementedException

Implements MyCaffe.layers.gpt.InputData.

Definition at line 656 of file TokenizedDataLayer.cs.

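`override bool MyCaffe.layers.gpt.TextInputData.GetDataAvailabilityAt(int nIdx, bool bIncludeSrc, bool bIncludeTrg)` [virtual]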

Returns true if data is available at the given index.

Parameters

| Parameter | Description |
|---|---|
| nIdx | Specifies the index to check. |
| bIncludeSrc | Specifies to include the source in the check. |
| bIncludeTrg | Specifies to include the target in the check. |

Implements MyCaffe.layers.gpt.InputData.

Definition at line 585 of file TokenizedDataLayer.cs.

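`override List<int> MyCaffe.layers.gpt.TextInputData.Tokenize(string str, bool bAddBos, bool bAddEos)` [virtual]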

Tokenize an input string using the internal vocabulary.

Parameters

| Parameter | Description |
|---|---|
| str | Specifies the string to tokenize. |
| bAddBos | Specifies to add the begin of sequence token. |
| bAddEos | Specifies to add the end of sequence token. |

Implements MyCaffe.layers.gpt.InputData.

Definition at line 668 of file TokenizedDataLayer.cs.
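The `bAddBos` and `bAddEos` flags wrap the tokenized string with the special sequence markers. The following is a minimal sketch under a hypothetical assumption: the BOS and EOS characters are stored in the vocabulary like any other character. The control characters used for them, the vocabulary contents and the name `TokenizeString` are illustrative only and are not taken from MyCaffe. The sketch also assumes that characters outside the vocabulary are rejected.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical vocabulary (assumption): BOS and EOS markers stored at indexes 0 and 1.
const char Bos = '\u0002';
const char Eos = '\u0003';
var vocab = new List<char> { Bos, Eos, ' ', '.', 'a', 'n', 'r' };
var charToIdx = new Dictionary<char, int>();
for (int i = 0; i < vocab.Count; i++)
    charToIdx[vocab[i]] = i;

// Tokenize str, optionally wrapping it with the BOS and EOS token indexes.
List<int> TokenizeString(string str, bool bAddBos, bool bAddEos)
{
    var result = new List<int>();
    if (bAddBos)
        result.Add(charToIdx[Bos]);
    foreach (char ch in str)
    {
        if (!charToIdx.TryGetValue(ch, out int idx))
            throw new ArgumentException($"Character '{ch}' is not in the vocabulary.");
        result.Add(idx);
    }
    if (bAddEos)
        result.Add(charToIdx[Eos]);
    return result;
}

List<int> withMarkers = TokenizeString("ran.", true, true);
Console.WriteLine(string.Join(", ", withMarkers));
```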

`override char MyCaffe.layers.gpt.TextInputData.BOS` [get]

Return the special begin of sequence character.

Definition at line 712 of file TokenizedDataLayer.cs.

`override char MyCaffe.layers.gpt.TextInputData.EOS` [get]

Return the special end of sequence character.

Definition at line 720 of file TokenizedDataLayer.cs.

`override List<string> MyCaffe.layers.gpt.TextInputData.RawData` [get]

Return the raw data.

Definition at line 554 of file TokenizedDataLayer.cs.

`override uint MyCaffe.layers.gpt.TextInputData.TokenSize` [get]

The text data token size is a single character.

Definition at line 565 of file TokenizedDataLayer.cs.

`override uint MyCaffe.layers.gpt.TextInputData.VocabularySize` [get]

Returns the number of unique characters in the data.

Definition at line 573 of file TokenizedDataLayer.cs.