This tutorial guides you through the steps to create and train a small GPT-based transformer model, as described in [1], to learn a Shakespeare sonnet.

Step 1 – Create the Project

In the first step, we create the minGPT project, which uses a MODEL-based dataset. MODEL-based datasets rely on the model itself to collect the data used for training.

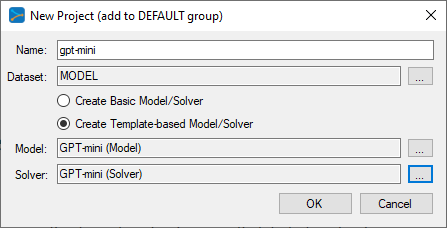

First, select the Add Project button at the bottom of the Solutions window, which will display the New Project dialog.

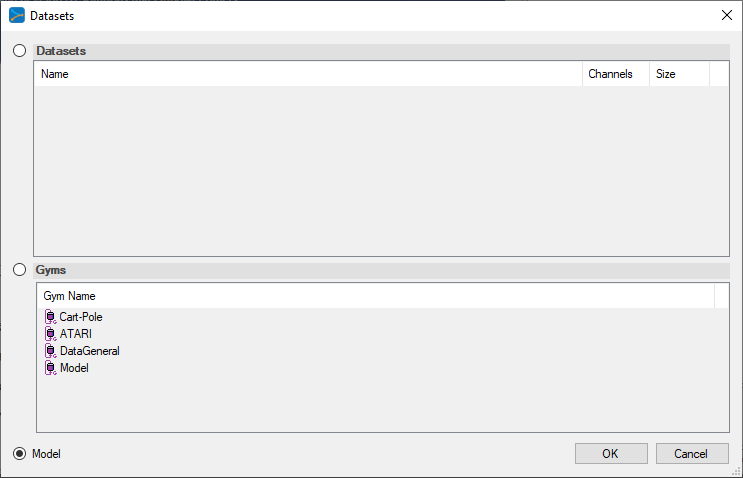

To select the MODEL dataset, click on the ‘…’ button to the right of the Dataset field and select the MODEL radio button at the bottom of the dialog.

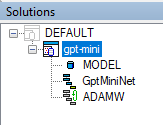

Upon selecting OK on the New Project dialog, the new minGPT project will be displayed in the Solutions window.

Step 2 – Review the Model

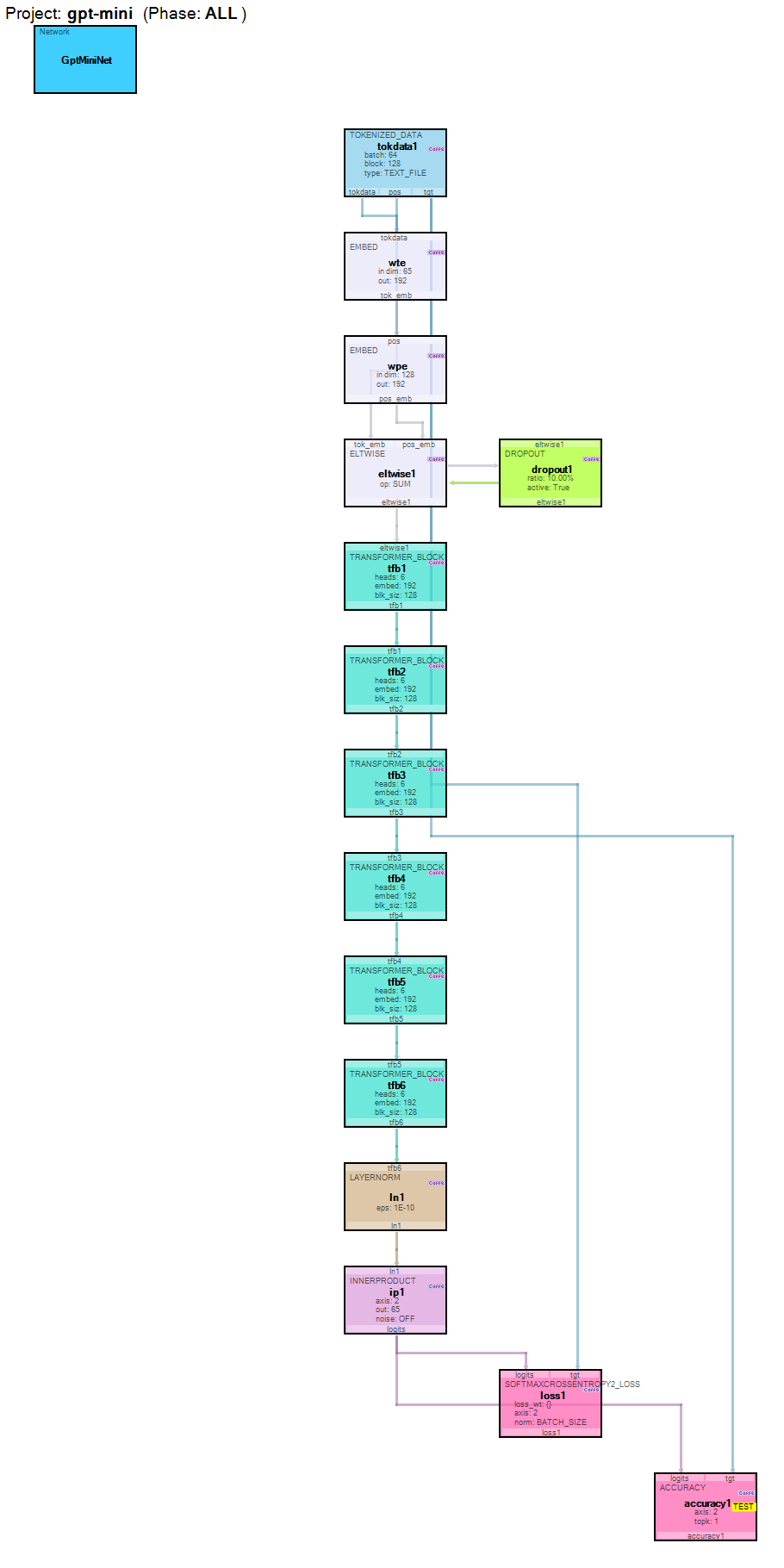

Now that you have created the project, let's open the model to see how it is organized. To review the model, double-click the GptMinNet model within the new gpt-mini project, which will open the Model Editor window.

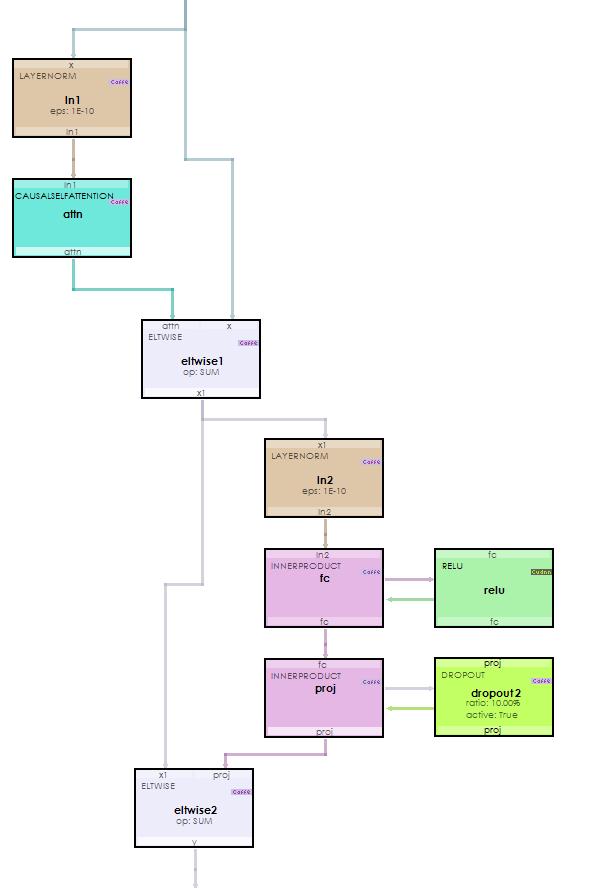

The GPT model feeds tokenized data (created by the TokenizedDataLayer) into two EmbedLayers that learn the embeddings of the token data and of the corresponding positional data. The two embeddings are then added together and fed into a stack of six TransformerBlockLayers, each of which internally uses a CausalSelfAttentionLayer to learn context. The last TransformerBlockLayer feeds the data into a LayerNormLayer for normalization and on to an InnerProductLayer that produces the logits. The logits are converted into probabilities using a SoftmaxLayer.
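The first and last stages of that data flow can be sketched in a few lines of plain Python. This is only an illustration under assumptions: the function names (`embed`, `softmax`), the toy sizes, and the random tables are hypothetical and are not taken from the SignalPop layers. It shows the token and positional embeddings being added element-wise, and a softmax turning logits into probabilities.

```python
import math
import random

def softmax(logits):
    """Convert a list of logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def embed(tokens, tok_table, pos_table):
    """Add each token's embedding to the embedding of its position."""
    return [
        [t + p for t, p in zip(tok_table[tok], pos_table[pos])]
        for pos, tok in enumerate(tokens)
    ]

# Toy sizes chosen for readability; they are not the tutorial's settings.
random.seed(0)
vocab_size, block_size, n_embd = 5, 4, 3
tok_table = [[random.uniform(-1, 1) for _ in range(n_embd)] for _ in range(vocab_size)]
pos_table = [[random.uniform(-1, 1) for _ in range(n_embd)] for _ in range(block_size)]

x = embed([2, 0, 4], tok_table, pos_table)  # 3 tokens -> 3 vectors of size n_embd
probs = softmax([2.0, 1.0, 0.1])            # stand-in logits for one position
```

In the real model the embedding tables are learned, and the transformer blocks, layer normalization, and inner product sit between these two stages.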

Each TransformerBlockLayer uses several additional layers internally.

The most important of these layers is the CausalSelfAttentionLayer used to learn context.
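To make the idea of causal attention concrete, here is a minimal single-head sketch in plain Python. It assumes standard scaled dot-product attention with a causal mask; the function name is hypothetical, and the actual CausalSelfAttentionLayer additionally applies learned projections and may use multiple heads, which are omitted here.

```python
import math

def causal_self_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v are lists of T vectors (each a list of floats).
    """
    d = len(q[0])
    out = []
    for i in range(len(q)):
        # Position i may only attend to positions 0..i (no peeking ahead).
        scores = [
            sum(a * b for a, b in zip(q[i], k[j])) / math.sqrt(d)
            for j in range(i + 1)
        ]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted sum of the visible value vectors.
        out.append([
            sum(w * v[j][c] for j, w in enumerate(weights))
            for c in range(len(v[0]))
        ])
    return out

seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = causal_self_attention(seq, seq, seq)
```

Because of the mask, the first output depends only on the first position, so changing later positions leaves earlier outputs unchanged. This is what lets the model be trained to predict the next token.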

Step 3 – Training

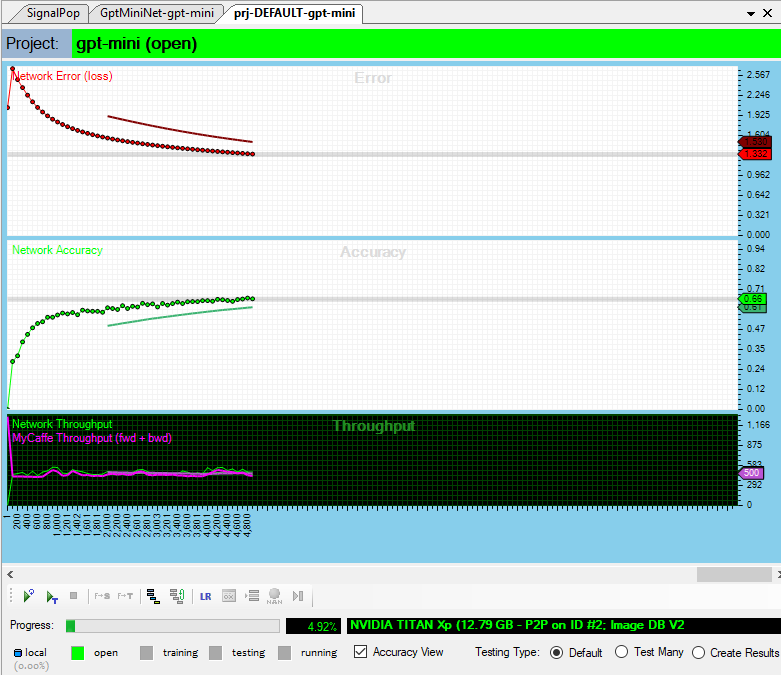

The training step uses an AdamWSolver for optimization, where weight decay is applied at an adamw_decay rate of 0.1 and the solver is run with no other regularization. The base learning rate is set to 0.0005, and the optimization uses a fixed learning rate policy. The Adam momentums are set to B1 = 0.9 and B2 = 0.95, with a delta (eps) of 1e-8. Unlike the original minGPT project, we did not use gradient clipping and therefore set clip_gradients = -1.
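For readers unfamiliar with AdamW, the following sketch shows one update step for a single scalar parameter using the hyperparameters listed above. It assumes the textbook AdamW formulation with decoupled weight decay; the function name `adamw_step` is hypothetical, and the AdamWSolver's internal implementation may differ in detail.

```python
import math

def adamw_step(p, g, m, v, t, lr=0.0005, b1=0.9, b2=0.95, eps=1e-8, decay=0.1):
    """One AdamW update for parameter p with gradient g at step t (t starts at 1)."""
    m = b1 * m + (1 - b1) * g        # first-moment (momentum) estimate
    v = b2 * v + (1 - b2) * g * g    # second-moment estimate
    m_hat = m / (1 - b1 ** t)        # bias correction
    v_hat = v / (1 - b2 ** t)
    p = p - lr * decay * p           # decoupled weight decay (assumed form)
    p = p - lr * m_hat / (math.sqrt(v_hat) + eps)
    return p, m, v

p, m, v = adamw_step(p=1.0, g=0.5, m=0.0, v=0.0, t=1)
```

The weight decay is applied directly to the parameter rather than being added to the gradient, which is why no separate regularization term is needed.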

Initially we trained a model on an NVIDIA TitanX, and the model used around 8 GB of the TitanX's 12 GB of GPU memory.

Each training cycle (fwd + bwd) on the NVIDIA TitanX (with TCC=on) completes in a little over 500 ms. For much faster results, the NVIDIA RTX A6000 is recommended, as it trains in roughly half the time, completing each training cycle in around 242 ms. Both setups used a batch size of 64 and a block size of 128.
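As a rough check on these figures, the arithmetic below converts the cycle times into tokens processed per second. It treats the TitanX time as exactly 500 ms, which is an approximation since the measured time is "a little over" that, so the TitanX throughput is slightly optimistic.

```python
batch_size = 64
block_size = 128
tokens_per_cycle = batch_size * block_size  # tokens seen in one fwd + bwd pass

titanx_ms = 500.0  # approximate; the measured time is slightly higher
a6000_ms = 242.0

titanx_tokens_per_sec = tokens_per_cycle / (titanx_ms / 1000.0)
a6000_tokens_per_sec = tokens_per_cycle / (a6000_ms / 1000.0)
speedup = titanx_ms / a6000_ms

print(f"tokens per cycle: {tokens_per_cycle}")
print(f"TitanX: ~{titanx_tokens_per_sec:.0f} tokens/s")
print(f"A6000:  ~{a6000_tokens_per_sec:.0f} tokens/s")
print(f"speedup: ~{speedup:.2f}x")
```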

Step 4 – Running the Model

Once the model is trained, you are ready to run it to create a new Shakespeare-like sonnet. To do this, select the Test Many testing type (radio button in the bottom right of the Project window) and press the Test button.

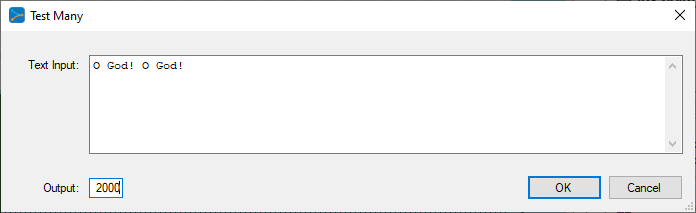

From the Test Many input dialog, enter the phrase “O God! O God”, change the output characters to 2000 and select OK.
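Conceptually, generating 2000 characters from a prompt is an autoregressive loop: the model predicts a distribution over the next character, one character is sampled, and the result is appended to the context. The sketch below is an assumption about the general technique, not a description of how the Test Many function is implemented. `generate` and `toy_model` are hypothetical names, and `toy_model` is a uniform stand-in for the trained network.

```python
import random

def generate(prompt, n_chars, next_char_probs, block_size=128, rng=random):
    """Append n_chars sampled characters to prompt, one at a time."""
    text = prompt
    for _ in range(n_chars):
        context = text[-block_size:]  # the model sees at most block_size characters
        chars, probs = next_char_probs(context)
        text += rng.choices(chars, weights=probs, k=1)[0]
    return text

def toy_model(context):
    """Hypothetical stand-in for the trained network: uniform over a tiny alphabet."""
    chars = list("abc ")
    return chars, [1.0 / len(chars)] * len(chars)

prompt = "O God! O God"
output = generate(prompt, 2000, toy_model, rng=random.Random(0))
```

With a trained model in place of `toy_model`, the probabilities would come from the SoftmaxLayer output for the last position in the context.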

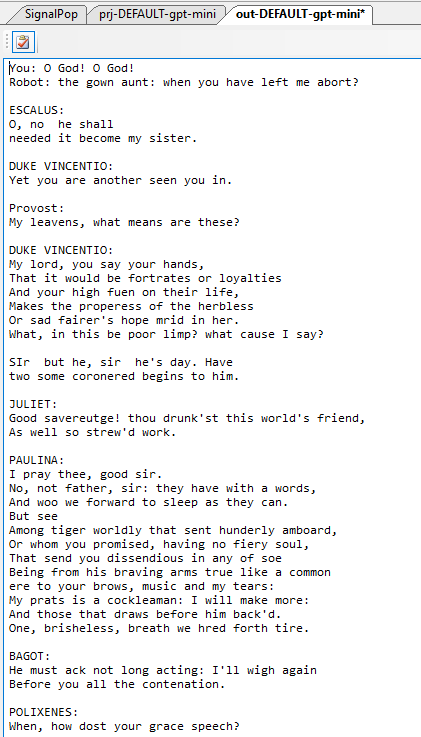

Once completed, the results are displayed in the output window.

Congratulations! You have now created your first Shakespeare sonnet using GPT running in the SignalPop AI Designer!

To see the SignalPop AI Designer in action with other models, see the Examples page.

[1] Andrej Karpathy, karpathy/minGPT, GitHub, 2022.